Discovery Center - Gesture controlled robot

| Sponsors | |
| Team Name | U of I Discovery |
| Duration | Fall 2018 - Spring 2019 |
| Faculty Adviser | |
| Mentors | |
| Client | |
| Team Members | |

The goal of the project is to equip the Discovery Center of Idaho with its first fully functioning, motion-actuated robotics exhibit. The exhibit will feature a robot arm controlled by hand gestures, along with a variety of activities that users can complete at different skill tiers (beginner, intermediate, and advanced).

Problem Definition

The Discovery Center of Idaho wants to add interactive exhibits that expose K-12 students to modern robotics technology. This project features a four-degree-of-freedom robot arm that performs a number of sorting activities driven by hand motion in free space, without direct physical contact with an actuator.

Background

Currently, the Discovery Center of Idaho does not have a robotics exhibit. This project will allow children and patrons of all ages to learn about robotics and to interact with a live robot that they control using only hand gestures.

Deliverables

- Fully assembled robot arm with Leap Motion sensor

- Software program to control the robot

- Documentation on how to operate, maintain, and troubleshoot the system

Specifications

- Robot arm must be compact and transportable

- 2-3 activities that the robot arm can perform, one per user skill tier:

  - Beginner

  - Intermediate

  - Advanced

- User must be able to control the robot using a Leap Motion controller

- Activities must be easy to reset for the next user

- Parts used (actuators, microcontrollers, etc.) must be easily replaceable and maintainable

- Exhibit must be safe for all ages to operate

Project Learning

Leap Motion

The Leap Motion controller is a motion-tracking device used to track hand gestures. It can track a user's palm, forearm, and each individual finger joint, and it can track two hands at a time. The controller tracks hand gestures using two cameras, and we found that it performs better when overhead light is kept to a minimum.

Servo Diagram

This diagram shows the configuration of the servos used to actuate the robot arm.

- Servo 1 controls the base rotation

- Servos 2 and 3 control shoulder movement

- Servos 4 and 5 control elbow movement

- Servo 6 controls the vertical movement of the wrist

- Servo 7 controls the wrist rotation

- Servo 8 controls the end effector

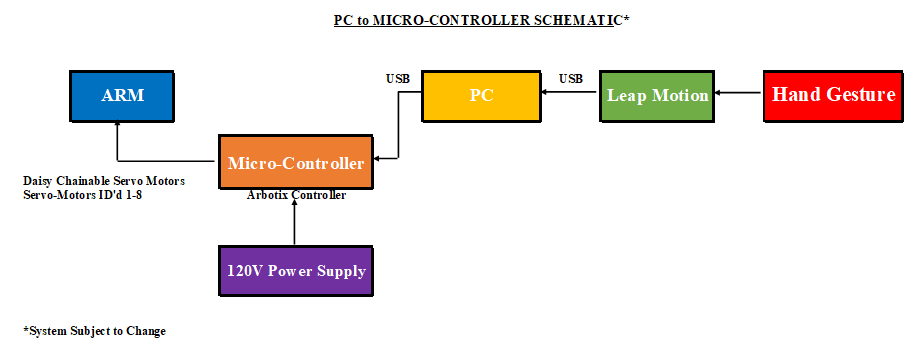
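As a rough illustration of how the joint layout listed above might be represented in code, the sketch below maps each joint to its servo IDs and converts a joint angle to a servo position value. It assumes servos with a 10-bit position range (0 to 1023) spanning 300 degrees, and it assumes that the second servo of a paired joint is mounted mirrored. Neither assumption is confirmed on this page, and all names (`Joint`, `kJoints`, `degreesToTicks`, `mirroredTicks`) are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Hypothetical joint table built from the servo list above.
// secondary == 0 means the joint is driven by a single servo.
struct Joint {
    const char* name;
    int primary;    // servo ID
    int secondary;  // paired servo ID, or 0 if none
};

constexpr std::array<Joint, 6> kJoints = {{
    {"base rotation", 1, 0},
    {"shoulder", 2, 3},
    {"elbow", 4, 5},
    {"wrist (vertical)", 6, 0},
    {"wrist (rotation)", 7, 0},
    {"end effector", 8, 0},
}};

// Assumed servo range: 0..1023 ticks over 0..300 degrees.
constexpr int kMaxTicks = 1023;
constexpr double kMaxDegrees = 300.0;

// Convert a joint angle in degrees to the nearest servo position value.
int degreesToTicks(double degrees) {
    assert(degrees >= 0.0 && degrees <= kMaxDegrees);
    return static_cast<int>(std::lround(degrees / kMaxDegrees * kMaxTicks));
}

// Assumed: the mirrored partner in a paired joint turns the opposite way.
int mirroredTicks(int ticks) { return kMaxTicks - ticks; }
```

With this layout, a shoulder command would send `degreesToTicks(angle)` to servo 2 and `mirroredTicks(...)` of the same value to servo 3.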

System Diagram 1

This diagram shows the system using an ArbotiX micro-controller to control the servos and provide the robot with power.

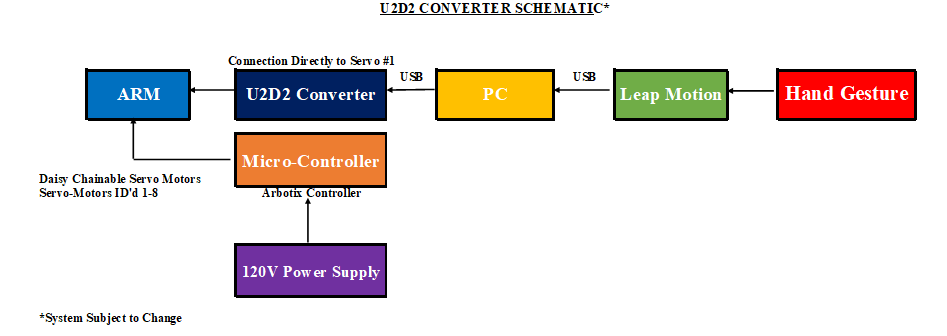

System Diagram 2

This diagram shows the system using a U2D2 USB converter, which would bypass the micro-controller and control the servos directly.

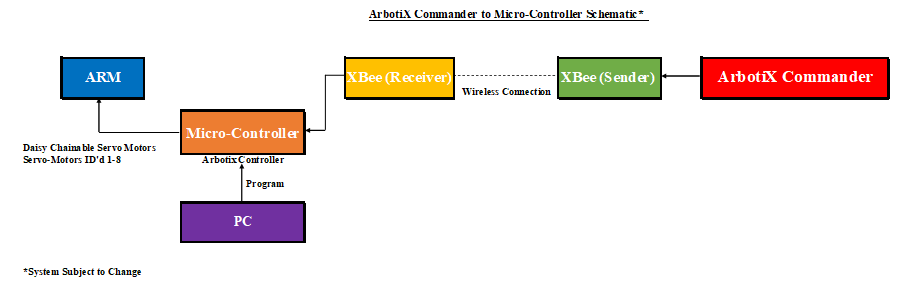

System Diagram 3

This diagram shows the system using an XBee receiver for a wireless hand-held controller, replacing the Leap Motion.

XBee wireless controller

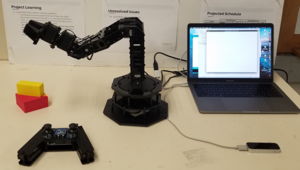

To learn how the robot responds to specific inputs, we first controlled it with a handheld remote. We bought the remote from the robot's manufacturer and studied the code provided with it to work out how we could eventually drive the robot from the Leap Motion controller.

Final Design

For our final system design, we chose the ArbotiX micro-controller because it reduced complexity and freed the U2D2 device for assigning IDs to other servos.

Final design system diagram

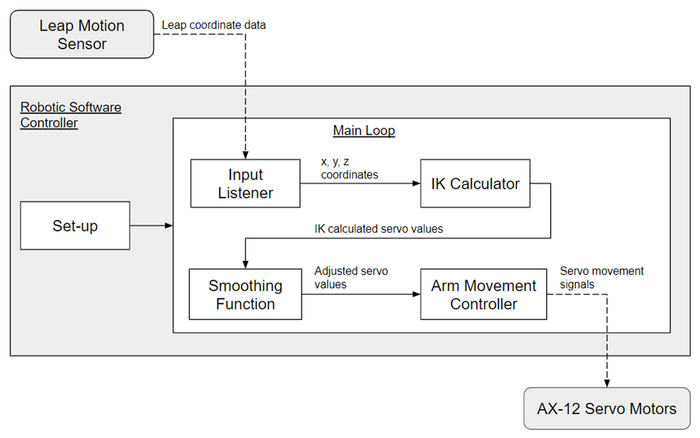

Software Diagram

Project Progress

XBee

We successfully controlled the robot using the XBee controller and demonstrated this at Snapshot Day #2.

Gripper Modification

Because our initial gripper was too small, we designed and 3D-printed a new, wider gripper that can grasp larger objects.


Inverse Kinematics functionality

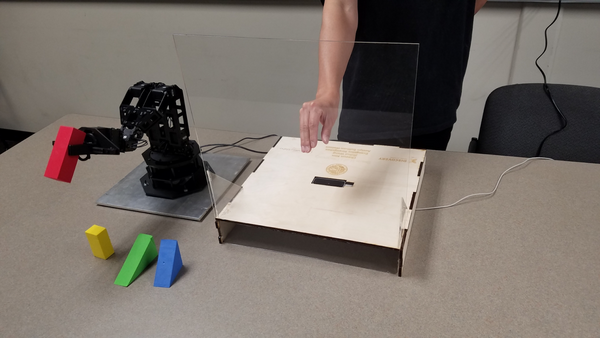

We implemented inverse kinematics to move the robot arm from the Leap Motion controller by adapting the XBee controller code to take its input coordinates from Leap Motion.
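This page does not give the inverse kinematics equations the team used. As a minimal sketch of the general idea, the example below solves the standard two-link planar problem for a shoulder and an elbow angle, given a target point in the arm's vertical plane. The function name `solveTwoLink`, the `JointAngles` struct, and the link lengths passed in are all hypothetical, and the robot's actual code may differ.

```cpp
#include <cassert>
#include <cmath>

struct JointAngles {
    double shoulder;  // radians, measured from the horizontal axis
    double elbow;     // radians, relative to the upper arm
    bool reachable;   // false if the target lies outside the arm's reach
};

// Two-link planar inverse kinematics (returns one of the two mirror-image
// solutions). (x, y) is the target in the arm's vertical plane; l1 and l2
// are the upper-arm and forearm lengths in the same units as x and y.
JointAngles solveTwoLink(double x, double y, double l1, double l2) {
    assert(l1 > 0.0 && l2 > 0.0);
    const double d2 = x * x + y * y;
    // Law of cosines gives the cosine of the elbow angle.
    const double c = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2);
    if (c < -1.0 || c > 1.0) {
        return {0.0, 0.0, false};  // target too far away or too close
    }
    const double elbow = std::acos(c);
    // Shoulder angle: direction to the target minus the offset caused by
    // the bent forearm.
    const double shoulder =
        std::atan2(y, x) -
        std::atan2(l2 * std::sin(elbow), l1 + l2 * std::cos(elbow));
    return {shoulder, elbow, true};
}
```

In a setup like the one described here, the palm position reported by the Leap Motion controller would first be scaled into the arm's workspace and then passed in as `(x, y)`, with the base rotation handled separately.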

Gripper functionality

We controlled the gripper by tracking the thumb and the other digits, then mapping the distance between the digits to a range of servo values.
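The mapping described above can be sketched as a clamped linear interpolation. The example below is an illustration only: the function name `pinchToServo`, the millimetre range, and the servo values used in the usage note are hypothetical and are not taken from the project code.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Map a measured pinch distance (thumb to finger, in mm) to a gripper servo
// position. Distances outside [minMm, maxMm] are clamped so the servo is
// never commanded past its configured closed or open limit.
int pinchToServo(double pinchMm, double minMm, double maxMm,
                 int servoClosed, int servoOpen) {
    assert(minMm < maxMm);
    const double clamped = std::max(minMm, std::min(pinchMm, maxMm));
    const double t = (clamped - minMm) / (maxMm - minMm);  // 0 = closed, 1 = open
    return static_cast<int>(std::lround(servoClosed + t * (servoOpen - servoClosed)));
}
```

For example, with an assumed pinch range of 20 mm to 100 mm and assumed servo limits of 0 (closed) and 512 (open), `pinchToServo(60.0, 20.0, 100.0, 0, 512)` returns 256, the midpoint of the servo range.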

Full Functionality Testing

The following photo shows the robot being operated and tested prior to the Senior Design Expo.

Design Validation

Leap Motion

| Call Out | Test | Target Date | Completed | Performance | Result | Actions |
|---|---|---|---|---|---|---|
| Base Servo-Motor Actuation (Servo-Motor ID #1) | Free rotation within the 300 degrees of motion, using Leap Motion to rotate the arm 90 deg. with hand motion | 11/1/2018 | 10/24/2018 | Completed, with refinements on the way. Actuation occurs, though it could be improved. | Good | Purchase the U2D2 converter, in hopes of reducing the number of conversions needed. Look into reducing latency. |
| | Rotation using Servo-Motor ID #1 on the base via Arduino software | 11/1/2018 | 11/1/2018 | Completed, with refinements on the way. The arm actuates by itself to the upright position through Arduino code. | Good | Continue progress, and look into actuating the arm through inverse kinematics with Leap Motion. Look into reducing latency. |
| | Rotation using Servo-Motor ID #1 on the base via Arduino/C++ code modification | 2/13/2019 | 1/30/2019 | Completed. The base servo actuates and moves side to side, mirroring user input. Latency isn't detrimental. | Good | See if reaction speed can be improved, but functionality is the highest priority. |
| Shoulder Servo-Motor Actuation (Servo-Motor ID #2 & #3) | Use Arduino code to move the robotic arm into the upright position | 11/1/2018 | 10/24/2018 | Completed, with refinements on the way. The arm actuates by itself to the upright position through Arduino code. | Good | Continue progress, and look into actuating the arm through inverse kinematics with Leap Motion. |
| | Lateral motion upwards and downwards, following user input to the Leap Motion controller via C++ code | 2/13/2019 | 1/30/2019 | Completed. The shoulder mirrors what the user inputs to the Leap Motion controller. | Good | See if reaction speed can be improved, but functionality is the highest priority. |
| Elbow Servo-Motor Actuation (Servo-Motor ID #4 & #5) | Use Arduino code to move the robotic arm to the upright position | 11/1/2018 | 11/1/2018 | Completed, with refinements on the way. The arm actuates itself to the horizontal/vertical position through Arduino code. | Good | Continue progress; begin looking into how to make the arm transition more smoothly under Leap Motion control. |
| | Using C++ code with the Leap Motion sensor, use upward and downward hand motions to test functionality | 2/13/2019 | 1/30/2019 | Complete. The elbow mirrors user input, just like the base and shoulder. | Good | Refine the overall movement by restricting certain movements and over-corrections, to reduce the risk of broken parts and unneeded wear and tear on the robotic arm. |
| Wrist (Lateral Motion) Actuation (Servo-Motor ID #6) | Use Arduino code to move the robotic arm to an upright position | 11/1/2018 | 11/3/2018 | Actuation occurs and it is seamless. | Good | Continue progress; next, attempt the same thing through inverse kinematics (IK). |
| Wrist Rotation Actuation (Servo-Motor #-NULL) | Removed with DCI's permission, due to possible miscommunication between the Leap Motion device and hand position | 2/13/2019 | N/A | Removed and replaced with a 'faux' servo-motor that maintains similar weight and arm extension without compromising gripper performance. | Good | N/A |
| Gripper Actuation (Servo-Motor ID #7) | Use the U2D2 converter to run the Dynamixel software and find the maximum and minimum gripper opening | 2/27/2019 | 2/27/2019 | Minimum and maximum gripper operating widths found and specified within the code. | Good | None |
| | Set limits in code just inside the maximum and minimum for Servo #7, then test the code via the Leap Motion controller | 3/1/2019 | 3/3/2019 | Smoother operation from minimum to maximum opening of the gripper. | Good | Smooth function, though it differs for different block sizes. Adjust the minimum gripper opening value so that the servo isn't overworked. |
| Implementation of U2D2 Converter Directly into Base Servo Motor | Use Leap Motion and inverse kinematics equations (with help from Dr. Perry) to actuate the robotic arm | N/A | N/A | N/A | N/A | Not implemented, because it wouldn't necessarily provide better results than the Arduino/C++ configuration already being run. |
| Implementation of the ArbotiX Commander v2.0 Controller | Movement/resolution and overall testing of the servo-motors' actuation, before continuing with Leap Motion | 11/29/2018 | 11/28/2018 | Completed. Motion lags somewhat; reaction time might be improved. | Good | Continue progress, and see what can be done before Snapshot Day #2 and the introduction to Ralph. Gain insight from the Arduino code and see how it can be adapted to make Leap Motion work. |
| Unit Test for checking servo-motor actuation through automated program | Submit the code in either C++ or Arduino format to test/debug any actuation errors within the servo-motors | 2/22/2019 | 2/27/2019 | Servo-motors work and provide feedback by individually moving to a specified test location. | Good | Keep this software for any troubleshooting that may be needed in the future, for the group and for DCI. |

Micro-controller

| Call Out | Test | Target Date | Completed | Performance | Result | Actions |
|---|---|---|---|---|---|---|
| Base Servo-Motor Actuation (Servo-Motor ID #1) | Actuates the arm into the upright position, before rotation ensues | 11/1/2018 | 10/24/2018 | Completed, with refinements on the way. Actuation occurs, though it could be improved. | Good | Look into implementing the Leap Motion device through the U2D2 converter or ArbotiX micro-controller. |
| Shoulder Servo-Motor Actuation (Servo-Motor ID #2 & #3) | Actuates the arm into the upright position, before rotation ensues | 11/1/2018 | 10/24/2018 | Completed, with refinements on the way. The arm actuates by itself to the upright position through Arduino code. | Good | Look into implementing the Leap Motion device through the U2D2 converter or ArbotiX micro-controller. |
| Elbow Servo-Motor Actuation (Servo-Motor ID #4 & #5) | Actuates the arm into the upright position, before rotation ensues | 11/1/2018 | 10/24/2018 | Actuation occurs and is seamless with the hard coding. | Good | Look into implementing the Leap Motion device through the U2D2 converter or ArbotiX micro-controller. |
| Wrist (Lateral Motion) Actuation (Servo-Motor ID #6) | Actuates the arm into the upright position, before rotation ensues | 11/1/2018 | 10/24/2018 | Actuation occurs and is seamless with the hard coding. | Good | Look into implementing the Leap Motion device through the U2D2 converter or ArbotiX micro-controller. |
| Wrist Rotation Actuation (Servo-Motor #-NULL) | See the explanation in the Leap Motion section above | N/A | N/A | N/A | N/A | N/A |
| Gripper Actuation (Servo-Motor ID #7) | Actuates the gripper laterally with servo-motor rotational motion | 2/27/2019 | 3/3/2019 | Actuation occurs and is seamless with the hard coding. | Good | Look into limiting how far the gripper closes, to reduce servo-motor freezing. |
| System Operation | Implement IIR (Infinite Impulse Response) filter code to give a smoother response without adding pauses within the system | 4/2/2019 | 4/5/2019 | Actuation occurs, is smoother, and is noticeably refined. Works much better than the pauses that were previously in the code. | Good | Make actuation even more seamless by tweaking the IIR filter's equation. Change it slightly for one joint of the robot arm, and see how the robot arm actuates. |
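The System Operation row above mentions an IIR filter but does not give its equation. As a minimal sketch, assuming the simplest first-order form (exponential smoothing, y[n] = y[n-1] + alpha * (x[n] - y[n-1])), a filter for a single joint target might look like the example below. The class name `SmoothingFilter` and the `alpha` parameter are hypothetical, and the filter actually used on the robot may be of a different order or form.

```cpp
#include <cassert>

// First-order IIR low-pass filter (exponential smoothing).
// alpha close to 1 follows the input quickly; alpha close to 0 smooths
// heavily at the cost of added lag.
class SmoothingFilter {
public:
    explicit SmoothingFilter(double alpha) : alpha_(alpha) {
        assert(alpha > 0.0 && alpha <= 1.0);
    }

    // Feed one new sample and return the filtered value.
    double update(double input) {
        if (!primed_) {
            state_ = input;  // start at the first sample to avoid a jump from 0
            primed_ = true;
        } else {
            state_ += alpha_ * (input - state_);
        }
        return state_;
    }

private:
    double alpha_;
    double state_ = 0.0;
    bool primed_ = false;
};
```

Because each output depends on the previous output, the filter smooths jitter in the hand-tracking input continuously, rather than by inserting pauses between servo commands. Using one filter instance per joint would allow `alpha` to be tuned for a single joint at a time.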

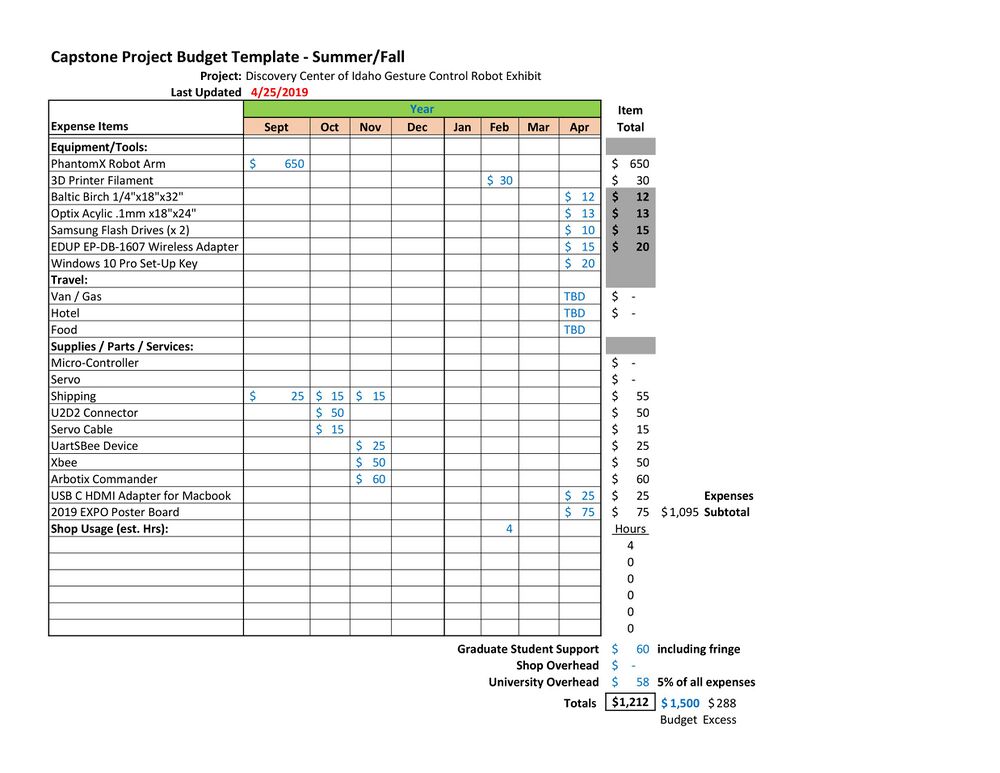

Budget

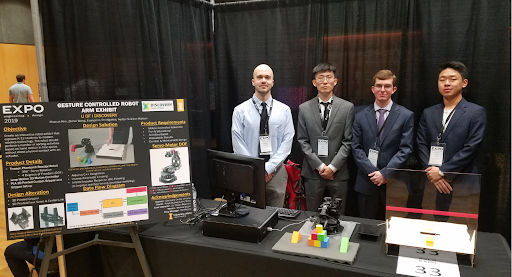

Design Expo

Team Members

- Evangelos Stratigakes, Major: Computer Science

- Zhihui Wang, Major: Mechanical Engineering

- Chaeun Kim, Major: Computer Science

- Austyn Sullivan-Watson, Major: Mechanical Engineering

Additional Documentation

GitHub

Project Schedule

Presentations

Design EXPO Poster

File:DCI Capstone Design Poster Final Design.pdf