Speech Therapy Application

The goal of this project is to create a speech visualization tool that analyzes speech and provides visual feedback as well as an error report. The tool should have a modular design that is easy to extend in the future.

| Sponsor | Micron |
| Mentors | Bruce Bolden & Feng Li |
| Team Name | Smooth Talkers |
| Duration | Fall 2017 - Spring 2018 |

Problem Definition

Using CMUSphinx, an open-source automatic speech recognition (ASR) toolkit, we will analyze speech files to detect errors, and we will develop a user interface in Java.

Background

Many projects in recent years have analyzed pronunciation errors, and many of them have used CMUSphinx. Our project differs in two ways. First, it is being developed specifically for child speech. Second, we aim to improve on the current state of the art.

Specifications

| Metric | Importance (1 = most, 5 = least) | Units | Marginal Value | Ideal Value |
| --- | --- | --- | --- | --- |
| Real-time feedback | 3 | Seconds | 60 | 5 |
| Textual error response | 1 | Coverage | Common Errors | All Errors |
| Audio error response | 2 | Coverage | Common Errors | All Errors |
| Child speech samples for testing database | 4 | Samples | 100 | 200 |
| Child speech samples for training database | 4 | Samples | 400 | 800 |
| Working acoustic model | 1 | Error Rate | 20% | <15% |
| GUI | 1 | x | x | x |
| Cross-system compatibility | 5 | OS | Windows | Windows, Mac, Linux |
| Handles low ambient noise | 4 | Decibels | 40 | 65 |
| Spectrum Analysis (FFT) | 1 | x | x | x |
| Waveform Visualization | 1 | x | x | x |
| Additional speech analysis | 2 | Graphs | Few | Many |

Project Learning

CMUSphinx

CMUSphinx is the ASR toolkit we are using for this project. It uses state-of-the-art algorithms based on decades of CMU research.

Speech Recognition

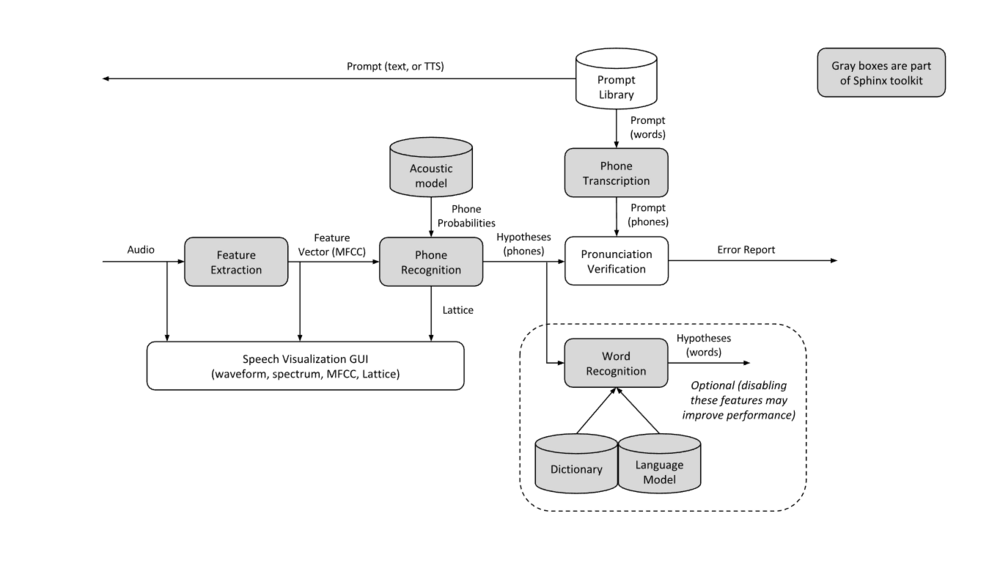

Speech recognition consists of multiple steps. First, feature extraction converts the audio into a feature vector. Then, this vector is matched against the acoustic model.

Lattices

Lattices are directed graphs. Each node represents a word or phone recognized at a given time. Each edge carries the score of one word or phone following another. A lattice represents alternative recognition results alongside the best hypothesis.

In Sphinx4, lattices are built using the hidden Markov model (HMM). When the answer is determined from the HMM, however, extra tokens are added to the token chain. Besides word tokens (and, we hope, phone tokens), the chain contains unit tokens and HMM tokens. The lattice condenses the token chains into word tokens and path tokens. The path tokens contain the scores between each pair of words.
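The graph structure described above can be illustrated with a minimal Python sketch. It is not Sphinx4's lattice API: the `EDGES` table, the `best_path` helper, and every word, time, and log-score in it are hypothetical values chosen for illustration. Nodes are `(word, time)` pairs, edges carry scores, and the best hypothesis is the highest-scoring path from the start node to the end node.

```python
from functools import lru_cache

# Hypothetical toy lattice: node -> list of (next_node, log_score).
# Words, times, and scores are invented for illustration only.
EDGES = {
    ("<s>", 0): [(("the", 1), -0.2), (("a", 1), -1.6)],
    ("the", 1): [(("cat", 2), -0.4), (("cap", 2), -1.1)],
    ("a", 1): [(("cat", 2), -0.5)],
    ("cat", 2): [(("</s>", 3), -0.1)],
    ("cap", 2): [(("</s>", 3), -0.1)],
    ("</s>", 3): [],
}


def best_path(edges, start, end):
    """Return (score, path) for the highest-scoring path from start to end."""

    @lru_cache(maxsize=None)
    def search(node):
        if node == end:
            return 0.0, (node,)
        best = (float("-inf"), ())
        for nxt, score in edges[node]:
            tail_score, tail = search(nxt)
            # An empty tail means the successor cannot reach the end node.
            if tail and score + tail_score > best[0]:
                best = (score + tail_score, (node,) + tail)
        return best

    return search(start)


score, path = best_path(EDGES, ("<s>", 0), ("</s>", 3))
words = [word for word, _ in path]
print(words, round(score, 3))
```

The alternative hypotheses ("a cat", "the cap") remain in the graph after the search, which is what makes a lattice more informative than a single best transcript.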

Microphones

The choice of a microphone took many factors into account. The program is ultimately intended for use in schools or people's homes. Knowing that the majority of users would not have access to expensive audio equipment, the team set a soft budget of $50 for a microphone. Using an inexpensive microphone allows the team to test the program with audio quality that a user could reasonably achieve. The final decision was to use the Blue Snowball microphone.

The Snowball offers a good balance of audio quality and price. It includes a cardioid recording mode, which mainly picks up sound from directly in front of the microphone and helps keep unwanted sounds out of a recording. USB connectivity means the mic should work on nearly every computer. The mic also comes with a small tripod, making it much more portable than a microphone that requires a boom arm mount.

This setup provides a portable recording system that produces quality audio recordings to train and test the program's acoustic model.

Speech disorders

Speech Sound Disorders, or SSDs, affect 10-15% of preschoolers and 6% of school-aged children. The majority of these have unknown causes.

There are two major categories of speech disorders: phonological disorders and motor speech disorders. In phonological disorders, patients are physically capable of producing the correct sounds. In motor speech disorders, patients physically cannot produce the correct sounds and must be shown how. Our software will be more helpful to the former category.

Relevant data for diagnosis includes omission and distortion of phonemes, stress errors, speed of speech, and consistency.

It's important to test phonemes alone, in syllables, in phrases, and in spontaneous speech. The results often differ between these contexts, and that information can be valuable to diagnosis.

Design

We originally used Pocketsphinx with Python, but then discovered that Sphinx4 with Java is 200x faster, most likely due to optimizations implemented after the release of Pocketsphinx.

Flowchart:

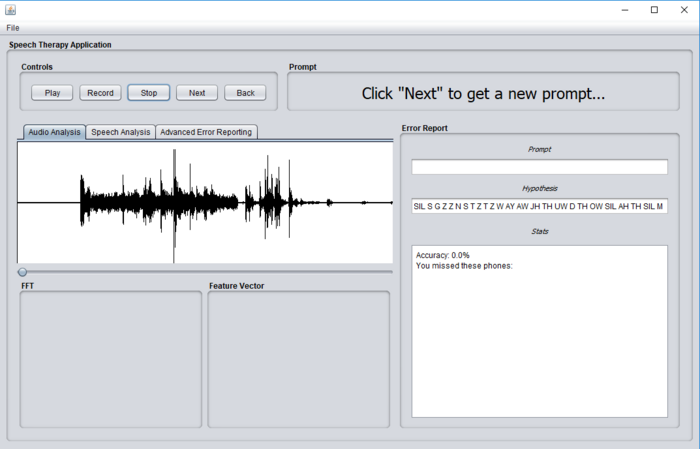

The Graphical User Interface will be written in Java and will look something like this:

Error Reporting

The Wagner-Fischer Algorithm

The Wagner-Fischer algorithm finds the edit distance between two strings. The edit distance is the minimum number of edits (insertions, deletions, and substitutions) required to transform one string into the other. The Wagner-Fischer algorithm can be used to measure the accuracy of individual trials.
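As a minimal Python sketch of the dynamic-programming table behind this algorithm (the function name `edit_distance` and the phoneme sequences are illustrative assumptions, not taken from the project's code), the distance can be computed over any two sequences, including lists of phonemes:

```python
def edit_distance(source, target):
    """Wagner-Fischer edit distance between two sequences."""
    rows, cols = len(source) + 1, len(target) + 1
    # dist[i][j] = edits needed to turn source[:i] into target[:j]
    dist = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        dist[i][0] = i  # delete every element of source[:i]
    for j in range(cols):
        dist[0][j] = j  # insert every element of target[:j]
    for i in range(1, rows):
        for j in range(1, cols):
            substitution = 0 if source[i - 1] == target[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,                 # deletion
                dist[i][j - 1] + 1,                 # insertion
                dist[i - 1][j - 1] + substitution,  # match or substitution
            )
    return dist[-1][-1]


# Illustrative phoneme sequences: the expected word versus two hypothetical
# recognizer outputs (one substitution, one omission).
expected = ["R", "AE", "B", "AH", "T"]
substituted = ["W", "AE", "B", "AH", "T"]
omitted = ["AE", "B", "AH", "T"]

print(edit_distance(expected, substituted))
print(edit_distance(expected, omitted))
print(edit_distance("kitten", "sitting"))
```

Working on lists rather than raw strings lets a multi-character phoneme label such as "AE" count as a single unit.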

F1 Scores

An F1 score measures the accuracy of a test from its true positives, false negatives, and false positives. The formula is:

<math> F_1 = \frac{2 \cdot \text{true positives}}{2 \cdot \text{true positives} + \text{false negatives} + \text{false positives}} </math>

To compute phoneme-level accuracy with this formula, each phoneme is scored separately: when the user pronounces a phoneme correctly, it counts as a true positive for that phoneme; when the user fails to pronounce an expected phoneme, it counts as a false negative for that phoneme; and when the user pronounces a phoneme that should not appear in the phrase, it counts as a false positive for that phoneme.
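The counting rule can be sketched in a few lines of Python. This is a simplified illustration, not the project's implementation: the helper `f1_per_phone` is hypothetical, and it assumes phonemes can be matched by count alone, ignoring their order in the phrase.

```python
from collections import Counter


def f1_per_phone(expected, recognized):
    """Per-phoneme F1 scores, matching phonemes by count (order ignored)."""
    exp, rec = Counter(expected), Counter(recognized)
    scores = {}
    for phone in set(exp) | set(rec):
        tp = min(exp[phone], rec[phone])  # pronounced and expected
        fn = exp[phone] - tp              # expected but not pronounced
        fp = rec[phone] - tp              # pronounced but not expected
        scores[phone] = 2 * tp / (2 * tp + fn + fp)
    return scores


# Illustrative phrase: "S" is expected twice but pronounced once,
# and an unexpected "TH" is pronounced.
scores = f1_per_phone(["S", "IH", "S"], ["S", "IH", "TH"])
for phone in sorted(scores):
    print(phone, round(scores[phone], 3))
```

An order-aware version would first align the two sequences, for example with an edit-distance alignment, and count matches, omissions, and extra phonemes from that alignment.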

FFT

FFT is short for Fast Fourier Transform. A Fourier transform converts a signal from the time domain to the frequency domain, so an audio signal can be broken down and viewed as a set of frequencies and how much of each is present in the signal. Computing the transform directly takes a long time for long signals. An FFT is an algorithm that produces the same result in much less time. Because this project is designed to provide instant feedback to the user, using an FFT was the only real choice.
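The difference between the two approaches can be shown with a minimal pure-Python sketch. It assumes a radix-2 Cooley-Tukey FFT, which is one common FFT variant and not necessarily the one used in this project, and the test signal is invented. Direct computation of an n-sample transform takes on the order of n² operations, while the FFT takes on the order of n log n.

```python
import cmath
import math


def dft(samples):
    """Direct discrete Fourier transform: O(n^2) operations."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT: O(n log n) operations."""
    n = len(samples)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return list(samples)
    even = fft(samples[0::2])  # transform of even-indexed samples
    odd = fft(samples[1::2])   # transform of odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


# Invented test signal: 64 samples of a sine wave completing 5 cycles.
N = 64
signal = [math.sin(2 * math.pi * 5 * t / N) for t in range(N)]
spectrum = fft(signal)
magnitudes = [abs(c) for c in spectrum[: N // 2]]
peak_bin = max(range(N // 2), key=lambda k: magnitudes[k])
print(peak_bin, round(magnitudes[peak_bin], 3))
```

The peak lands in the frequency bin that matches the number of cycles in the window, which is the kind of information a spectrum display presents to the user.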

Training and Testing

To train an acoustic model that better recognizes child speech, we used the sphinxtrain package along with a database curated by the Center for Spoken Language Understanding.

The sphinxtrain package supports both training new acoustic models and adapting the default model. We found significant improvement over the default model with both methods, with the adapted model performing slightly better.

Team Information

| Member | Biography | Discipline |
| --- | --- | --- |
| Simon Barnes | Simon Barnes is a senior Computer Engineering major at the University of Idaho. His interest in the project comes from his mother's work with children who have speech disabilities, as well as from the programming involved in speech recognition. His hobbies include cars, technology, and Super Smash Bros Melee for the Nintendo GameCube. | Computer Engineering |
| Emma Bateman | Emma Bateman is a senior Computer Science major at the University of Idaho. She is interested in machine learning and wants to learn more about speech recognition. | Computer Science |
| Joshua Bonn | Joshua Bonn is a senior Computer Science major at the University of Idaho. He is interested in this project because of his own issues with speech and his experience with speech therapy, as well as an interest in machine learning. His interests include video games and music. | Computer Science |